I’ve been preaching that you should release early and often. I’ve also underlined the importance of learning from customers: backlog priorities should be based on data, not on intuition alone. “If you release and you’re not embarrassed, you’re too late”, I’ve been repeating. Others at Flowa share this mindset.

Lately, I’ve had a great opportunity to test whether we practice what we preach. In December, my colleague Tero started writing a small app to support his own meditation practice. There was no good app to support reflection after meditation.

Meditation apps seemed to offer a nice timer, guided meditations and some light gamification to maintain your interest. They are good when you start, but once you want to increase your practice from 20 minutes per day to, say, 360 hours per year, the apps won’t support you. Nor do they help you overcome the common challenges you face after the first year or two of practice. For instance, there is no support against uncertainty and anxiety related to your practice.

I guess every seasoned meditator struggles with doubts and uncertainty at least sometimes. Is it really worth the time to sit every day for tens of minutes even if there is often no observable progress for weeks? Tero’s idea, Zen Metrics, was to offer a solution to this: tools that make the progress visible.

This is a story of the early days of our app, and how we developed it.

From a pet project to a SaaS service

The beginning was – well, not quite like in the book: Zen Metrics started as a pet project. Within just a few days we converted it into a testable experiment with a guess about the customer problem and unique value proposition. And then we tested if it worked.

I think Tero first told us about this project in November. I didn’t fully get the idea, and I was skeptical about the monetization ideas. Still, the idea sounded cool. A month later, Tero said that he was going to build an MVP (minimum viable product) anyway. In the worst case, it would be good practice and he would get a tool that he needs. We gave it a thumbs up: if something inspires you and you can make it real by yourself, just do it. And I’m happy that Tero decided to do so.

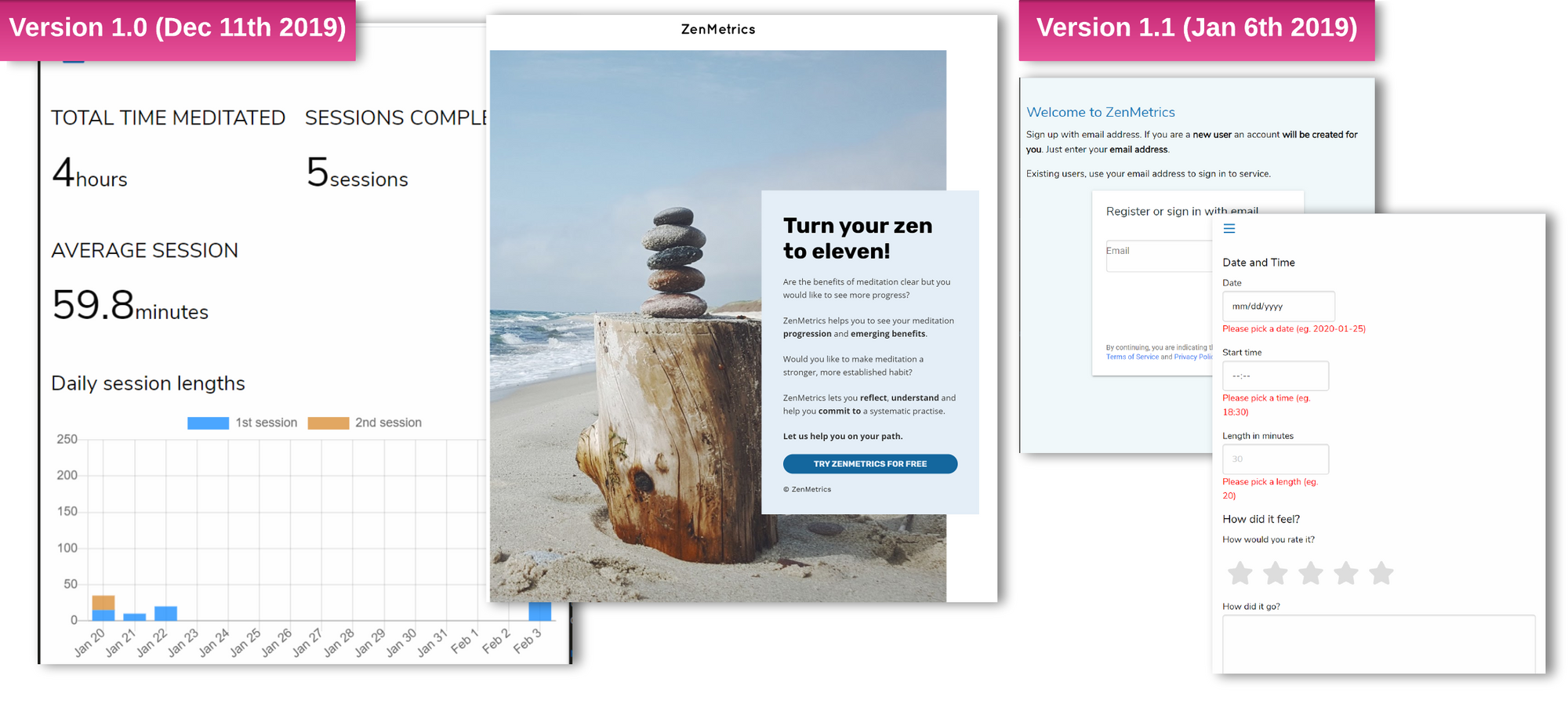

The first experiment: Release MVP and the landing page

I’m not sure how long it took Tero to build the first version. In calendar time it took approximately two weeks, while he was working on a client project at the same time. I’m a bit embarrassed that Tero wrote it partly during the weekend, but then again, I probably would have done the same in the situation we were in in December.

I got in after Tero and Juha had already created a landing page and set up the first Google Ads campaign. We didn’t spend much money on advertising, just enough to learn whether the landing page works and converts visitors into app users. It did. Evidently people liked our value proposition. We also promoted the app in two work-related Slack teams and got a few users there.

The second experiment: Improve registration and visual look'n'feel

We now had a way to attract potential users. It seemed that others had the same problem as Tero, and they were seeking a solution to it. In startup jargon this is commonly called “problem/solution fit”. That is, we are working on a real problem, where people are seeking a solution and might be interested in paying for it. People found our page through Google and registered, and the conversion rates were better than average even though our app was far from the vision Tero had. Admittedly, the evidence for problem/solution fit was weak and preliminary, and we had no evidence that anyone would pay for it. Still, we had some confidence, and we had invested less than 50 hours in development and design in total. In any case, acquiring customers was definitely no longer the biggest bottleneck.

Next, it seemed that many people dropped out just before registering. My guess was that the visual look’n’feel was not appealing enough. Juha had a theory that “email + password” registration and authentication was too clumsy. Usually we prefer just one change at a time. This time we decided to do both, because adding Facebook and Google authentication was very easy. We also had numerous other ideas, but they didn’t address the main bottleneck: too few people signed up after clicking the “Try it out” button on the landing page.

The third experiment: activation and feedback mails

The bottleneck moved from registration to activation and retention. There were only a few users who had added entries. This is the problem we are still struggling with. Yet, we've made clear progress.

One and a half weeks ago we asked existing users for feedback and a bit later we sent an activation mail. We had not implemented any mailing support and sending mails is still mostly manual. We use Drip, but there is no automation between the app and Drip. Until we have hundreds of users, it’s not a big deal to send the mails manually.

We expected that most of the users wouldn't give feedback or become active users. The results were a bit below expectations, but nonetheless this had an impact.

The fourth experiment: video interviews

There’s another experiment we have been working on during the last two weeks: We interviewed a couple of seasoned meditators who had not yet used our app or seen the landing page. The goal was to better understand our target customer segment.

A common misconception is that you need a large number of interviews before they are useful. This is simply not true. Just a few deep and open interviews help a lot.

I believe that the key is how open you, as an interviewer, are in listening to what the interviewees are saying. To put it bluntly, it’s really hard to keep your own shit separate from the interviewees’ thoughts and points of view. For instance, years ago I ran a number of interviews using Ash Maurya’s Running Lean approach. It didn’t help me listen carefully. Instead, I had a tendency to just seek validation for my hypothesis, and it limited my ability to learn. Last autumn, I started to experiment with the format from IDEO's design thinking toolbox. Not only did I get much better results, I also felt a lot better. I really enjoyed every interview.

After four interviews, we have pretty good initial guesses about who needs this kind of app.

Our early adopter...

- ...has meditated many years with varying activity.

- ...meditates to develop themselves, rather than due to an esoteric or religious motive.

- ...knows at least approximately what kind of benefits meditation has according to scientific studies.

- ...is interested in psychology or neuroscience or both (at least a bit).

- ...thinks that the relation between meditation and holistic well-being is analogous to that of physical exercise. Meditation helps with, for instance, stress, anxiety, chronic pain and sleeplessness. But if you start only when you have a problem and need a “pain killer”, the impact of the practice will be a fraction of what it could be. To get the full benefits of meditation, you most likely need to practice diligently for years, not just when you have a problem that meditation relieves.

- ...finds guided meditations unnecessary or even unhelpful. If a user already uses another meditation app, that app serves mostly as a timer. The user no longer seeks guided meditations, let alone needs them. It is okay to have a few instructions and reminders at the beginning or after the meditation, but instruction during the meditation more often distracts than helps.

The fifth experiment: Milestones

Our fifth and latest experiment was to implement “milestones”. After all, a crucial part of the vision was to increase confidence and motivation to practice diligently. Adding milestones now was also a potential solution to the primary problem: lack of retention.

We published the feature on Monday this week, and I cannot yet tell whether it is what users love and want. (Tero and I love it for sure.)

Our hypothesis is that the number of new users who come back will rise. In the beginning, we had no users who had created more than one entry. Now we have a few. The experiment is successful if we soon have a dozen active users. That is, 25-30% of all users are active, and most new users become active.

At least, we now get encouraging feedback such as “Looking good! Using it every day! [...] Please roll on!”
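The success criterion for the milestones experiment can be written down as a small check. This is only a sketch: it assumes that "active" means a user with more than one entry (the post does not define it precisely), it takes the lower bound of the 25-30% range as the threshold, and the function names and sample data are made up for illustration.

```python
from collections import Counter


def active_share(entry_user_ids, all_user_ids, min_entries=2):
    """Count active users and their share of all users.

    Assumption: a user is "active" with at least `min_entries` entries.
    `entry_user_ids` holds one user id per created entry.
    """
    counts = Counter(entry_user_ids)
    users = set(all_user_ids)
    active = [u for u in users if counts[u] >= min_entries]
    share = len(active) / len(users) if users else 0.0
    return len(active), share


def experiment_succeeded(active_count, share, min_active=12, min_share=0.25):
    """A dozen active users who make up at least a quarter of all users."""
    return active_count >= min_active and share >= min_share


# Made-up data: 40 registered users, 12 of them with two entries each
# and 8 more with a single entry.
all_users = list(range(40))
entries = [u for u in range(12) for _ in range(2)] + list(range(12, 20))

count, share = active_share(entries, all_users)
print(count, round(share, 2), experiment_succeeded(count, share))
```

Writing the threshold down before looking at the numbers makes it harder to reinterpret a weak result as a success afterwards.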

What next?

There are a lot of known unknowns: We are developing a sports tracker for the mind. While the markets for sports trackers and biohacking are growing and meditation is getting increasingly common, we don't know if there will ever be a significant market for this kind of tracking tool.

We know that there is a need for a tool that solves the problem we try to solve, but we don’t know yet how to solve it well enough. If we knew, our conversion rates would look different. We also don’t know how many people have the problem and how significant this problem is.

It would have been easier if we had a really good, fact-based guess about what kind of solution is good enough, but we don’t. The second best thing is to get validation without building anything. It’s easy when the users can imagine the solution, but practically impossible if they cannot. For instance, I don’t think people would have really understood how Facebook solves problems related to communication and creating communities without seeing the first version. The third best thing is to create a bare-bones app and find out with minimum effort whether people like it. We chose this path.

Probably the easiest way to tackle some of the known unknowns is to get out of the office and talk with meditators. We have some guesses about which features might be critical for success, but before building them it's time to check whether our guesses are correct. Our main focus in the coming weeks will be on building a better understanding of our customers.

How about profit?

No revenue yet. This is definitely a topic we have had heated debates about. In our case, we don’t yet have problem/solution fit, let alone product/market fit. We have an increasing amount of evidence for problem/solution fit, but not enough.

Product/market fit is startup jargon. It basically means that you solve a real problem and not only are people willing to pay for it, but there are paying customers in great numbers. In addition, you can easily reach potential new customers, and happy users do the marketing for you.

Even if we don’t have problem/solution fit yet, I believe that it's a good investment to spend some more time finding it. I’m partly biased, as I personally like the app and it’s useful to me. Anyway, it would be cool to make it profitable.

I’m also pretty sure that we are not far from problem/solution fit. Once we have a clear indication that the solution makes most new users happy, the next problem is to figure out how to get at least one user to pay for it.

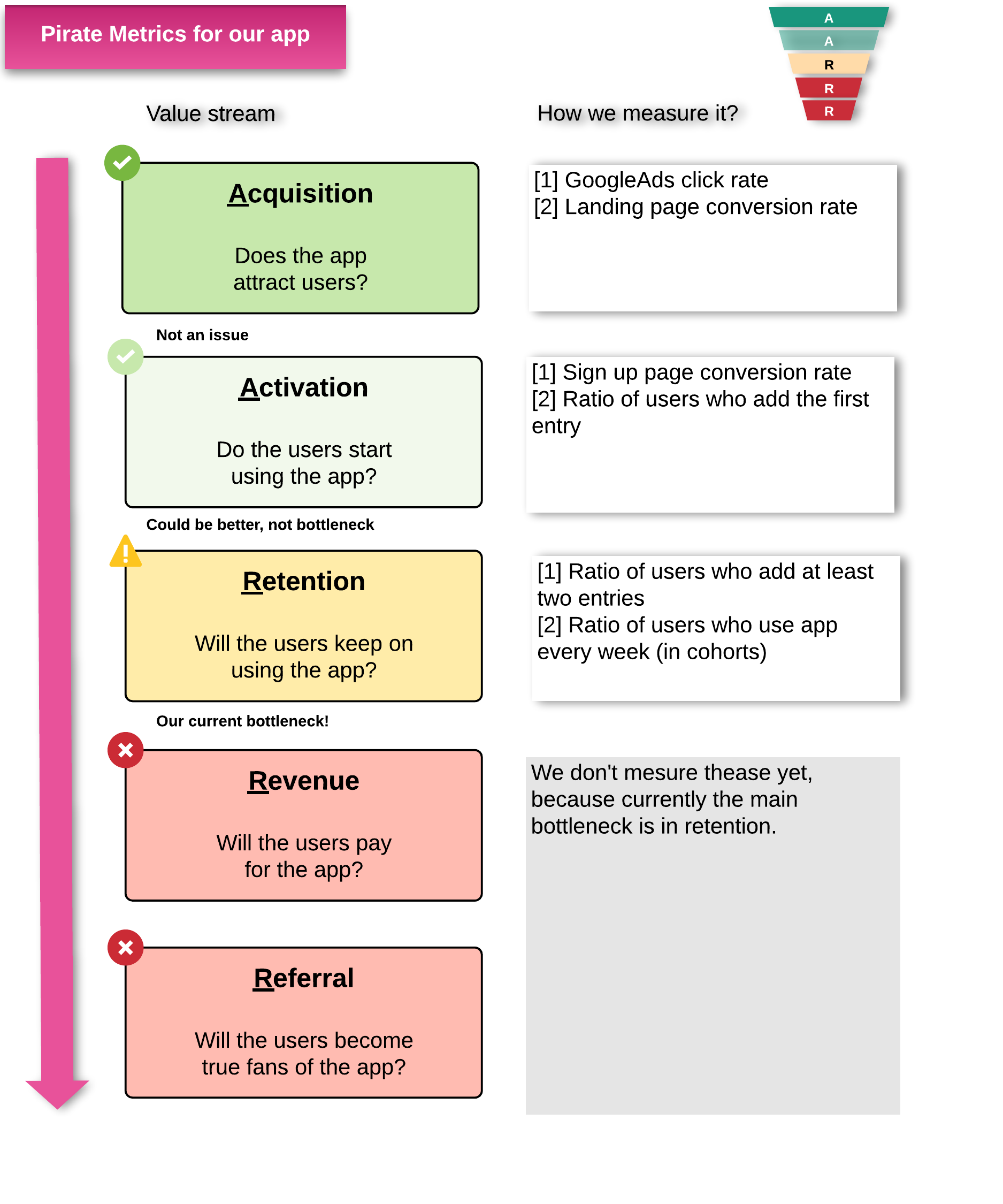

Our metrics and value stream

Currently, we have a working channel to get users into the application, and we have baseline metrics up and running so that whenever we run an experiment, we can measure the impact. We have good guesses about the problem, value proposition and early adopter segment, and we have some evidence for them. Finally, we know which pain point is the most critical to work on next.

As a baseline for metrics, we use Pirate metrics (Acquisition, Activation, Retention, Revenue, Referral). I’m not going to explain them here, but you will easily find more information by googling Pirate metrics. We use them partly because it’s easy to find benchmark data, and partly because they are a good match for this kind of service, so there is no reason to reinvent the wheel. We are currently working on retention, and once it’s no longer the bottleneck we will move on to revenue. It has been emotionally hard not to head for revenue too early, but if you cannot keep users in the app when it’s free, it’s unlikely that they will pay for it.
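To make the idea of a moving bottleneck concrete, here is a minimal sketch of how funnel counts could be compared against benchmark conversion rates. The stage names, counts and benchmark values are invented for illustration and are not our real data; picking the stage with the largest shortfall against its benchmark is just one possible way to define the bottleneck.

```python
def step_rates(funnel):
    """Conversion rate of each stage relative to the stage before it.

    `funnel` is an ordered list of (stage_name, user_count) pairs.
    """
    rates = {}
    for (_, prev_count), (name, count) in zip(funnel, funnel[1:]):
        rates[name] = count / prev_count if prev_count else 0.0
    return rates


def bottleneck(funnel, benchmarks):
    """Return the stage that falls furthest below its benchmark, or None."""
    rates = step_rates(funnel)
    shortfalls = {s: benchmarks[s] - r for s, r in rates.items() if s in benchmarks}
    worst = max(shortfalls, key=shortfalls.get, default=None)
    if worst is None or shortfalls[worst] <= 0:
        return None  # every stage meets or beats its benchmark
    return worst


# Hypothetical numbers, for illustration only.
funnel = [("visitors", 1000), ("signups", 100), ("activated", 20), ("retained", 5)]
benchmarks = {"signups": 0.05, "activated": 0.40, "retained": 0.30}

print(step_rates(funnel))
print(bottleneck(funnel, benchmarks))
```

With these made-up numbers, sign-up conversion beats its benchmark while activation falls furthest behind, so activation would be the stage to work on next.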

Within just a bit more than 8 weeks of calendar time we have not only built the minimum viable product, we have also run 5 full-scale experiments and done 4 big releases and numerous smaller ones. In none of those weeks did we work full-time on this app. No release required more than 5 days of work, and most of them required just one or two, including all technical, design and marketing effort.

So, did we practice what we preached?

I’d say that we did, more or less. No one is perfect. We have talked too little with potential users about the app and their needs. But we did talk. And there probably is a way we could have learned a lot more with the same effort. Nonetheless, our time investment was really small and we have active users. We are probably still overconfident about our ideas, and fail to question our intuition when we should. But we know this, and we work on our biases.

Was it enough? I don’t know. Our monthly recurring revenue, one of the main metrics in the startup scene, is currently 0€. So, by that metric it is not yet enough. Yet, it’s too early to say.

If you think that our approach was cool and you’d like to do something similar, don’t hesitate to contact us. This is something we'd love to help our clients with as well.

Acknowledgement

The great cover photo was taken by Jakob Owens on Unsplash.